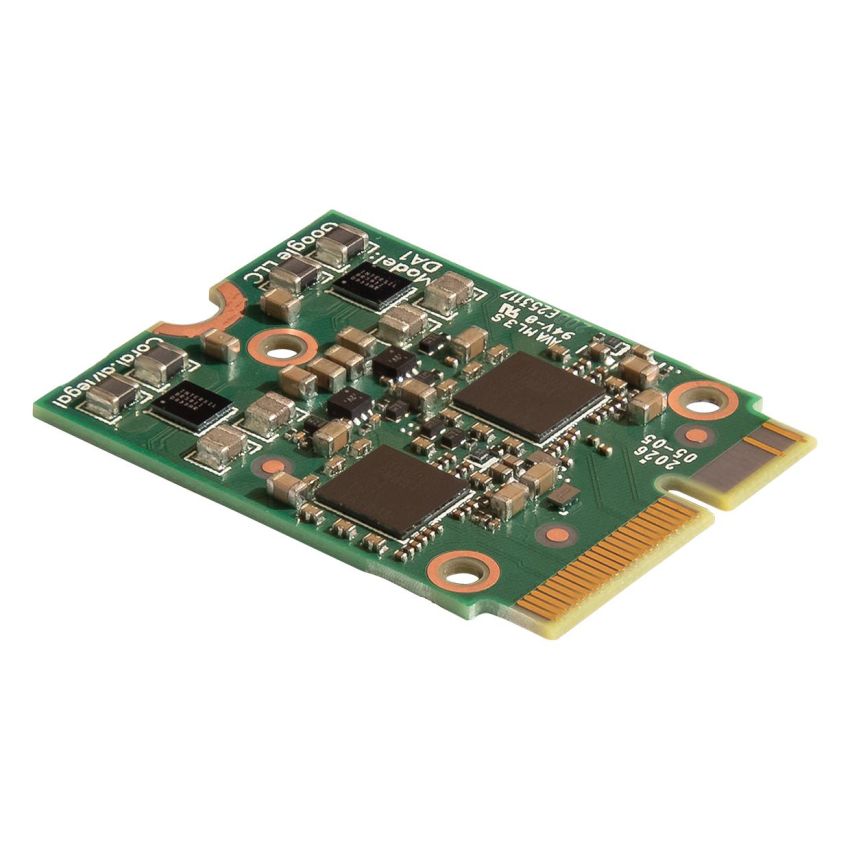

The Coral M.2 Accelerator with Dual Edge TPU is a high-performance AI acceleration module designed to deliver powerful edge computing capabilities for advanced embedded and industrial applications. Built with two Google Edge TPU processors, this compact M.2 module enables efficient and ultra-fast machine learning inference directly on-device, eliminating the need for cloud processing and ensuring low latency and enhanced data privacy.

Engineered for demanding AI workloads, this accelerator provides a combined performance of up to 8 trillion operations per second (TOPS), making it ideal for running multiple machine learning models simultaneously or handling high-throughput inference tasks. Each Edge TPU operates independently, allowing developers to either run parallel models or pipeline workloads across both processors for maximum efficiency.

Designed in an M.2 2230 form factor with an E-key interface, the module integrates seamlessly into compatible embedded systems, industrial PCs, and edge computing platforms. It connects via dual PCIe Gen2 x1 interfaces, ensuring reliable high-speed communication between the host system and the AI processors.

The Coral M.2 Accelerator is optimized for TensorFlow Lite models and is widely used in applications such as computer vision, smart surveillance systems, industrial automation, robotics, and AI-driven analytics. Its ability to perform real-time inference at the edge reduces system dependency on cloud infrastructure, improving reliability and lowering operational costs.

With its industrial-grade design, wide operating temperature range, and high-performance efficiency, this module is a powerful solution for developers and businesses looking to deploy scalable AI solutions in real-world environments.

Key Features:

- Dual Google Edge TPU processors delivering up to 8 TOPS combined AI performance for high-speed inference.

- Supports parallel model execution or pipelined processing for enhanced AI workload efficiency.

- M.2 2230 E-key form factor enables seamless integration into embedded and industrial systems.

- Dual PCIe Gen2 x1 interface ensures fast and reliable data communication with host devices.

- Optimized for TensorFlow Lite models, ideal for computer vision and edge AI applications.

- Wide operating temperature range (-40°C to +85°C) suitable for industrial environments.

REQUEST FOR QUOTATION

REQUEST FOR QUOTATION